Dealing with the pandemic, supply chain issues and the move to electric vehicle production has brought a renewed focus on automation in the automotive industry. AWL experts Gerhard Junte, Business Unit manager Automotive, and Sander Lensen, Technology manager, discuss some of the trends driving this and how developments in vision systems can offer flexible solutions.

We’re now seeing new developments in technologies that are enabling much more sophisticated applications around automation in the automotive industry. Could you provide some context as to where you see the trends in this market going?

Gerhard: There are a few trends in the market which make automation very attractive, especially flexible automation. There are two main drivers for this: the scarcity of people and the uncertainty in volume forecasts from OEMs. Also, there is a lot of interest in automating quality inspection.

The automotive factories had standstills during COVID because of falling demand. Also, people left the industry and made career changes. Our customers, the Tier 1s, are really struggling to get up to speed again, and the chip crisis has added a lot of uncertainty to this speed-up.

It’s also the demographics. Young people do not want to spend time working at machines anymore, and that puts everything in perspective. The lack of people is a significant driver for the uptake of automation.

The other thing that we see, and COVID reinforced this, is the volatility in the automotive market. The planned volumes from the OEMs dropped and this was tough for Tier 1 suppliers. To be able to manage this volatility they are now looking for more flexible production where they produce multiple product variants with the same machine, and to make sure that machines can keep producing when volume requests are changing.

Next to the scarcity of people and the volatility in the automotive market, we see a third trend: quality inspection. The OEMs demand more in-line quality inspections, with processes that are not so dependent on people. Daimler, for example, has very high requirements for quality inspection. All of this creates the need for very flexible automation and vision.

Flexibility has always been important for the OEMs and the tier suppliers. Especially following the 2008 financial crash, everyone was talking about flexibility. Then we developed and we moved on, and now we get into the electrification phase and again it rises to the top of the priority list. In terms of solutions, how is a company like AWL responding to those things we’ve just discussed?

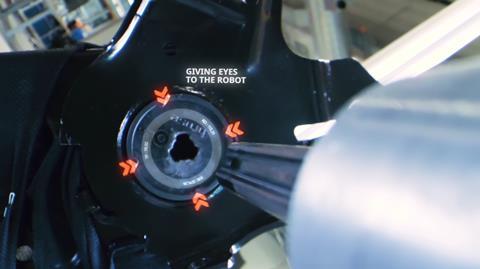

Gerhard: Through our R&D process, we are looking for innovations that offer more machine flexibility. A good example is in joining techniques. It is not that hard to create a gripper that can handle a considerable variation of products for different cars. But to laser weld the parts together accurately, you need a very precise, product-specific fixture that clamps all the parts rigidly. So, the process demands a certain inflexibility in the joining operation. To improve the flexibility of the process, we must either find ways to change over fixtures quickly or get rid of the fixtures altogether. A different joining technology could allow us to create a jigless production process. Besides a different joining process, the robot will also need ‘eyes’ to work without a jig.

Vision systems have been around a long time, but they are becoming more sophisticated. Can you offer some context on the developments of this technology?

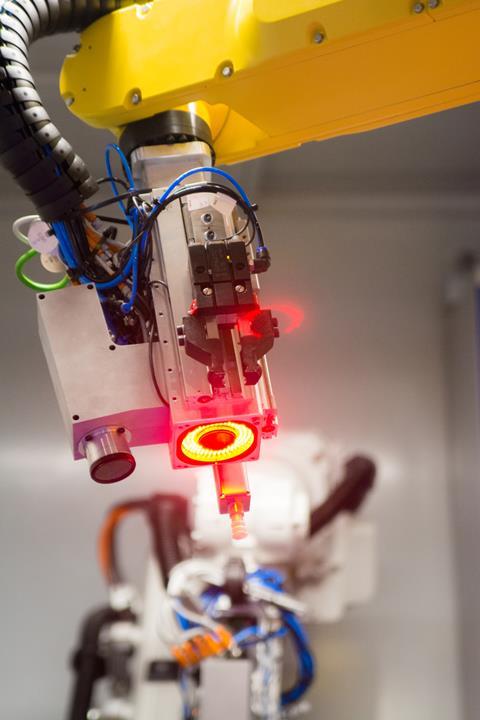

Sander: As a robotic integrator, we’ve provided vision systems to support our customers’ requirement to feed machines automatically. You need to understand vision technology to achieve a stable, robust production solution. It is more than just adding a camera. You need to know how to create reliable data with a vision sensor, and we have years of experience building this knowledge.

We have seen that just adding a camera to a robot does not make a complete solution. You need expertise in three domains: vision technology (getting reliable data from a vision sensor), the engineering of flexible grippers, and the behaviour of robots. At AWL, we are expert in all three. Combining them makes it possible to create a flexible, robust production solution that runs at speed.

Could you offer a couple of examples around the kind of automated operations and vision systems that you’ve developed, and are working on now, that might have an automotive application?

Sander: In the past, there were a lot of manual operations, such as joining a fastener to a metal part, and because of the variety of products, it was not possible to automate the process. It was normal to see that as a manual process.

With the knowledge we’ve gained, we can now develop a solution that grabs any metal part within the window we select. We grab the parts, fasten them to each other without a jig, and turn that into an automatic production process.

Another example would be an assembly process, where we need to attach two different products to each other. In this specific case, a product just lies on a belt, unfixed and without any positional accuracy. The vision system detects the product’s position with a high level of accuracy. After that, it is possible to mount a second part to it.
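To make this step concrete, here is a minimal sketch of how a detected position could be turned into a mounting target. It is an illustration only, not AWL's implementation. It assumes the vision system reports the product's planar pose (x and y in mm, rotation in degrees) in the robot's base frame, and that the mounting point is known in the product's own coordinates. The names `Pose2D` and `mount_target` and all numbers are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Planar pose: position in mm, rotation in degrees (counter-clockwise)."""
    x: float
    y: float
    theta_deg: float


def mount_target(product: Pose2D, offset_x: float, offset_y: float) -> Pose2D:
    """Map a mounting point given in product coordinates into the robot frame.

    The offset is rotated by the product's detected rotation and then
    shifted by the product's detected position.
    """
    t = math.radians(product.theta_deg)
    x = product.x + offset_x * math.cos(t) - offset_y * math.sin(t)
    y = product.y + offset_x * math.sin(t) + offset_y * math.cos(t)
    return Pose2D(x, y, product.theta_deg)


# Hypothetical detection: product found at (250, 100) mm, rotated 90 degrees.
detected = Pose2D(x=250.0, y=100.0, theta_deg=90.0)

# Hypothetical mounting point 40 mm along the product's own x-axis.
target = mount_target(detected, offset_x=40.0, offset_y=0.0)
```

Because the product is rotated by 90 degrees, the 40 mm offset along its own x-axis ends up along the robot's y-axis. A real cell would also need camera-to-robot calibration and, in most cases, a full 3D pose.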

For a robot it’s quite complicated because it doesn’t feel or see anything like a human does. When a human needs to do an assembly process, they feel how to mount a part to a product. For a robot, it is impossible to feel something, so it needs to make its movements more accurately. By adding vision to a robot, we proved that we were able to perform assembly processes that were not expected to be possible.

We hear this phrase the ‘democratisation of automation’, where integration and operation of the automation is simplified allowing the tier suppliers or the OEMs to quickly re-task the robots. Is that another added value around these systems?

Sander: It is indeed the flexibility we want to add. In the past, we used to create a jig that was made for just one or two product variants. Then, when a third product variant came in, we needed to make a mechanical adjustment and reprogram the robot to produce that third part.

What we want to achieve is a mechanical solution that is already prepared for different applications and products; the only thing that changes is the product, which is detected by a vision system. Our customers can then add different products with only minor modifications, so that new products can be produced on the same line.

This is very important now, isn’t it? Tier suppliers and OEMs are still running high-volume ICE production, and now they also need to produce components for electric vehicles. They often have to manage two completely different lines while bringing in new technologies.

Sander: Indeed. In the past, when we needed to add a different variant, we had to reserve the machine for a refit. If you do that just once a year, it’s not that big an issue, but if you need to add a product several times a year, you cannot wait for a weekend each time to have it done. There’s a big need for a flexible solution so that customers can add new products themselves in just a day.

You’re putting a lot of development into vision systems. Where do you see the potential for this technology going forward?

Gerhard: We have machines in our factory which do more advanced tasks than we currently see in the automotive industry. It’s an entirely different world. We see logistics warehousing using deep-learning AI technology but automotive is perhaps more conservative in its approach, and we are only just starting with this in automotive.

Even if we only bring 20% of that technology into the automotive world, it will be revolutionary.

You mentioned in your blog, Gerhard, that the learning from AI is transferable and can be utilised in different applications. Do you see this technology coming into automotive at some point?

Gerhard: Yes. For example, we make a lot of joining machines. The main areas where we have developed flexible automation systems using vision technology are the loading area (feeding the machines), fixture reduction (allowing the robot to ‘look’ at the part and perform the process), and part inspection. Inspection can mean measuring the part completely in-line, but also looking at, for example, the weld seams.

With weld seams, you already see deep learning coming in. Every weld seam is different, so it needs to recognise the variations and learn – this could be a good weld, but this is a bad weld.

This is usually a manual, visual check. An operator checks the (safety-critical) welds on every welded part. This consumes time, and we all know that an operator’s concentration when scanning welds declines very quickly. In the factory of the future, we can foresee an operator not doing this anymore.

OEMs are now requiring 100% traceability – they want to know details for every weld, and images to show if it is a good weld or not. And you must store this information for 10 years.
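As a rough illustration of what such a per-weld traceability record might hold, here is a minimal sketch. The field names, the file path and the serial numbers are hypothetical; only the good/bad verdict, the stored image and the 10-year retention period come from the requirement described above.

```python
from dataclasses import dataclass
from datetime import date

RETENTION_YEARS = 10  # retention period mentioned in the interview


@dataclass(frozen=True)
class WeldRecord:
    """One inspected weld. All field names are illustrative assumptions."""
    part_serial: str
    weld_id: str
    image_path: str
    is_good: bool
    inspected_on: date

    def retain_until(self) -> date:
        """Earliest date on which the record could be deleted."""
        target_year = self.inspected_on.year + RETENTION_YEARS
        try:
            return self.inspected_on.replace(year=target_year)
        except ValueError:
            # 29 February has no counterpart in a non-leap target year.
            return self.inspected_on.replace(year=target_year, day=28)


record = WeldRecord(
    part_serial="PART-0001",           # hypothetical serial number
    weld_id="W03",                     # hypothetical weld identifier
    image_path="images/PART-0001_W03.png",
    is_good=True,
    inspected_on=date(2023, 5, 17),
)
deadline = record.retain_until()
```

In practice the record would live in a database or archive system rather than in memory, and the exact retention rules would follow the OEM's specification.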

Sander: We are also talking about how to create a complete smart factory. As Gerhard said, AI is already being implemented into quality measurement, and we are increasing its use.

How can we make the machine more intelligent, so that it learns automatically and knows where welds need to be made or which parts need to be placed?

A more adaptive machine, a more adaptive plant; I think, if we look forward to the next five years, we are moving in this direction of technology innovation.


If you would like to know more about vision technology and its potential benefits for your company, contact us via info@awl.nl or find out more about our solutions at awl.nl.