The Fraunhofer Institute for Production Technology has been one of the leading lights in developing the Industry 4.0 concept. Nick Holt discussed the institute's perspective with Dr. Thomas Bobek, coordinator of the Fraunhofer High Performance Center Networked Adaptive Production, including how he saw the concept being implemented in automotive manufacturing.

What do you see as the aims of the Industry 4.0 (I4.0) concept?

Overall, I4.0 should answer a number of questions and help in certain areas. One such area is productivity: having information on the status of the work-piece at all stages of the process allows informed decisions to be made about further operations on it. If you have all the information, for example on the part geometry and material composition, then you can derive the proper parameters for further processing. Currently we have some 'hardware' solutions for I4.0, but these only scratch the surface of what is possible.

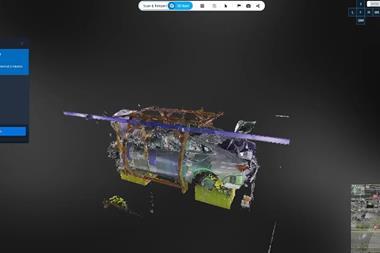

These systems are already in use, but at Fraunhofer we believe there is a need to develop a deeper technological understanding of the production process. For example, if we mill a part within certain parameters, we will produce a component of a certain level of quality. A deeper understanding of the process and materials will enable us to create better simulations and predict the quality of the final piece more accurately. However, a lot of development is still needed. We need to create new simulations for milling, turning and so on; new data models, such as our Digital Twin system, which will collate all the information; and all the necessary networking between the different machines and workstations. Our goal is to connect the production systems to the working environment so that all the relevant information is easily available, offering a digital visualisation of the specific production process through the Digital Twin. For example, if we were working on process planning, we would need the 'cut model' and the quality specifications for the final piece, and from these we could derive the correct machining parameters.

This would create a 'view' for a process planner or for someone responsible for quality assurance. The quality inspector needs to overlay the cut model with the metrology data, check for any deviations and see whether they fall within the tolerances specified by the customer. So it is critical to have clever visualisation software and access to the relevant information in the database.
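At its core, the quality-assurance check described above compares measured points with the nominal cut model and flags those outside tolerance. The Python sketch below is a minimal illustration, assuming the metrology points have already been registered (aligned) to the cut model and paired one-to-one with nominal points; real inspection software usually matches points to CAD surfaces instead. The function name `find_deviations` and the sample values are hypothetical.

```python
import math

# A point as (x, y, z) coordinates in millimetres.
Point = tuple[float, float, float]


def find_deviations(
    nominal: list[Point], measured: list[Point], tolerance_mm: float
) -> list[tuple[int, float]]:
    """Return (index, deviation_mm) for each measured point outside tolerance.

    Assumes measured[i] has already been aligned to the cut model and
    corresponds to nominal[i]; no registration is performed here.
    """
    if len(nominal) != len(measured):
        raise ValueError("nominal and measured point lists must be the same length")
    out_of_tolerance = []
    for i, (n, m) in enumerate(zip(nominal, measured)):
        deviation = math.dist(n, m)  # Euclidean distance between the paired points
        if deviation > tolerance_mm:
            out_of_tolerance.append((i, deviation))
    return out_of_tolerance


# Hypothetical data: three nominal points and their measured counterparts.
nominal = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (10.0, 10.0, 0.0)]
measured = [(0.0, 0.0, 0.01), (10.0, 0.0, 0.08), (10.02, 10.0, 0.0)]
print(find_deviations(nominal, measured, tolerance_mm=0.05))
```

Here only the second point, which sits 0.08 mm off the model, exceeds the 0.05 mm tolerance.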

What are the requirements in terms of the software and hardware to be able to implement this level of visualisation and control of the production process?

On the hardware side, the machines (a milling machine, for example) need to be able to communicate the process data. Many now have a number of sensors monitoring the process but lack the capability to 'share' this data with the wider working environment. So it will be crucial to be able to retrieve this accumulated data from outside the shopfloor. Machine builders are now beginning to prepare their equipment for this purpose, developing interfaces that allow access to the process data.

For example, this would allow you to compare the programmed axis positions with the actually executed axis positions. There can be small deviations between the two due to the machine dynamics: sometimes heavy machinery cannot move along its axes as quickly as the program requires. We need to be able to access this information and track any deviations, and there have been some developments in this direction, such as the latest versions of Siemens control systems. They allow data access via OPC UA, a known, standardised protocol. This means you don't have to adapt your production network for each new machine supplier.
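Comparing programmed and executed axis positions is, at its core, a per-sample subtraction. The sketch below assumes the two position series have already been read from the control (for instance over OPC UA) and time-aligned at the same sampling instants; it does not show any OPC UA client calls. The names `AxisReport` and `check_axis`, the sample values and the 0.03 mm limit are illustrative assumptions.

```python
from typing import NamedTuple


class AxisReport(NamedTuple):
    worst_error_mm: float       # signed deviation with the largest magnitude
    flagged_samples: list[int]  # sample indices where |error| exceeds the limit


def check_axis(programmed: list[float], actual: list[float], limit_mm: float) -> AxisReport:
    """Compare programmed and actually executed positions of one axis.

    Assumes both series were sampled at the same instants.
    """
    if not programmed or len(programmed) != len(actual):
        raise ValueError("series must be non-empty and of equal length")
    # Error is actual minus programmed position; its sign shows the direction of the deviation.
    errors = [a - p for p, a in zip(programmed, actual)]
    worst = max(errors, key=abs)
    flagged = [i for i, e in enumerate(errors) if abs(e) > limit_mm]
    return AxisReport(worst, flagged)


# Hypothetical X-axis samples in mm: the axis lags behind during a fast move.
report = check_axis([0.0, 1.0, 2.0, 3.0], [0.0, 0.98, 1.95, 2.99], limit_mm=0.03)
print(report)
```

Tracked over many parts, such reports would show whether the lag is systematic for a given program or machine.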

Using one common protocol for communication will be a huge step forward. We need something similar to HTTP, the common protocol for communicating via the Internet, to allow any network of machines to be connected.

Does that common machine language exist or does it need to be developed?

There are a number of standards currently in use, such as MTConnect and OPC UA, but in the next five years we will probably see this number fall, helping to standardise the communication language. On the other side, the computer systems used in workstations and offices will also need to be able to use this common language in order to communicate with the machines. Software planning systems will need to offer this interface so that, for example, a simulation can get 'real' data from a machining centre or metrology system and produce an accurate result.

Do you think implementing this vision of I4.0 will change how the human element of the production operation approaches the process?

This is hard to predict, but the systems that become available should help to improve the quality of the decisions being made. Engineers and machine operators should be able to make more informed decisions or act faster. The automated systems should provide the information on which a decision is based, but the final decision to begin production would remain in the hands of the human worker. It's unlikely that in the next decade we will see a move to 100% automation in all aspects of the production process; this isn't realistic for complex parts.

So this is about the quality and depth of the information being provided by the networked machines and systems?

Yes. You should get more insight into the process, and if you have simulations that can determine the quality of the part after the production step, you can vary the parameters virtually and decide at a very early stage of process planning what the correct parameters are.

At present there are often a number of iterations. You might produce a part using the first set of process parameters, discover an issue and then have to keep adjusting those parameters until you get the desired finished part. With better simulation models you would be able to make these changes virtually, perhaps with no physical iterations needed to arrive at the correct process. This approach goes very deeply into the technological description of the machining or additive process.
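A virtual parameter variation can be illustrated with a very simple process model. The sketch below uses the textbook kinematic approximation for the roughness of a turned surface, Ra ≈ f²/(32·r) (feed f per revolution, tool nose radius r), as a stand-in for the much richer simulations discussed in the interview; it ignores tool wear, vibration and material behaviour. The function names, candidate feeds and roughness limit are hypothetical.

```python
from typing import Optional


def predicted_ra_um(feed_mm_per_rev: float, nose_radius_mm: float) -> float:
    """Ideal (kinematic) roughness for turning, Ra ~ f^2 / (32 r), in micrometres."""
    ra_mm = feed_mm_per_rev ** 2 / (32 * nose_radius_mm)
    return ra_mm * 1000  # mm -> micrometres


def choose_feed(
    candidates: list[float], nose_radius_mm: float, ra_limit_um: float
) -> Optional[float]:
    """Vary the feed virtually and return the largest one whose predicted Ra meets the limit.

    A larger feed removes material faster, so the largest feasible value is taken
    as the most productive choice; returns None if no candidate qualifies.
    """
    feasible = [f for f in candidates if predicted_ra_um(f, nose_radius_mm) <= ra_limit_um]
    return max(feasible, default=None)


# Hypothetical setup: 0.8 mm nose radius, customer requires Ra <= 1.6 micrometres.
best = choose_feed([0.05, 0.10, 0.15, 0.20, 0.25], nose_radius_mm=0.8, ra_limit_um=1.6)
print(best)  # 0.2 (predicted Ra of about 1.56 micrometres)
```

In a real planning system the model would be a validated simulation, and the choice would also weigh tool life and cycle time.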

How does the term Big Data apply to I4.0?

In the context of production, Big Data means recording all the data from the process of manufacturing a part: the positions of the machine axes, temperature, vibrations and so on; basically everything that can be recorded. Machine-learning algorithms can then sift through the data looking for correlations between, for example, the temperature on the shopfloor and deviations in the part's dimensions. These might be correlations that engineers hadn't been aware of before. The danger is that not every correlation points to a specific issue; it may just be a coincidence.

We want to develop process knowledge so that it's easier to assess whether a correlation actually reflects an issue with the process settings or is just a coincidence. This is where it is important for the engineer, the human element, to make the final decision, and it's something we don't see changing in the near future.
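The risk of coincidental correlations can be made concrete. The sketch below computes the Pearson correlation between shopfloor temperature and a dimensional deviation, then runs a permutation test that shuffles one series to estimate how often a correlation at least that strong appears by chance. The data and function names are hypothetical. A small p-value only makes pure chance unlikely; it does not establish cause and effect, which still needs the process knowledge and engineering judgement described above.

```python
import random
import statistics


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient (assumes neither series is constant)."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def permutation_p_value(
    x: list[float], y: list[float], n_perm: int = 2000, seed: int = 0
) -> float:
    """Share of random re-pairings whose |r| is at least as large as the observed |r|."""
    rng = random.Random(seed)
    observed = abs(pearson(x, y))
    shuffled = list(y)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break any real link between x and y
        if abs(pearson(x, shuffled)) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)  # add-one correction avoids reporting p = 0


# Hypothetical log: shopfloor temperature (deg C) and bore diameter deviation (micrometres) per part.
temperature_c = [20.1, 20.5, 21.0, 21.4, 22.0, 22.3, 22.9, 23.5]
deviation_um = [2.0, 2.4, 3.1, 3.3, 4.0, 4.1, 4.9, 5.2]
r = pearson(temperature_c, deviation_um)
p = permutation_p_value(temperature_c, deviation_um)
print(f"r = {r:.2f}, permutation p ~ {p:.4f}")
```

When thousands of signals are screened at once, some strong correlations will appear by chance alone, which is why a flagged pair should be checked against process knowledge before any settings are changed.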

Could you explain the concept of Service-Oriented Architecture?

It's the development of a smart manufacturing network in which all the machines in the process chain are connected through a common network. This allows data to be exchanged between the machines and provides storage of, and access to, the data for the Digital Twin, which builds a data model of the component. This model would then be available to all relevant people, perhaps through a company's intranet.

Our aim is to have simulations, created from this machine data, used actively during the production process. As soon as the machines involved in a particular production operation provide metrology or process data, the simulation is triggered automatically and can feed information to the Digital Twin. This gives a more complete insight into all the required aspects (quality, structure, geometry, materials, etc.) of the part being produced. Attaching a process planning system to this also improves the flexibility of the production operations. If, for example, one machine is unable to perform its regular operation, the process planning system can communicate with all the available machines, access their full specifications, assess their capabilities and availability, and reassign the task accordingly. This should allow better decisions to be made and reduce downtime.
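The reassignment step described above can be sketched as a filter over the machines' advertised capabilities and current status. This is a minimal illustration, not the Fraunhofer process planning system: the `Machine` fields, the use of queue length as the measure of availability, and the machine names are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Machine:
    name: str
    operations: set[str]    # operations the machine advertises it can perform
    available: bool = True  # False while the machine is down or blocked
    queue_length: int = 0   # jobs already waiting at the machine


def reassign(operation: str, machines: list[Machine]) -> Optional[Machine]:
    """Pick an available machine that offers the operation, preferring the shortest queue."""
    candidates = [m for m in machines if m.available and operation in m.operations]
    return min(candidates, key=lambda m: m.queue_length, default=None)


machines = [
    Machine("mill_a", {"milling"}, available=False),  # regular machine, currently down
    Machine("mill_b", {"milling", "drilling"}, queue_length=3),
    Machine("mill_c", {"milling"}, queue_length=1),
    Machine("lathe_1", {"turning"}),
]
chosen = reassign("milling", machines)
print(chosen.name if chosen else "no machine available")  # mill_c
```

Returning `None` rather than raising lets the planning system decide whether to queue the job, escalate to an operator or wait for the regular machine.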

Ideally the Digital Twin could be shared between all the companies in the value chain involved in the production of a component, each adding to the data model, enriching it. This would offer the customer a complete overview of all process steps that had been taken, giving them a better understanding of the final component, in terms of its design, structure, quality, etc.

Given the variations in equipment levels, types and sophistication across different machine shops, is there a need for some level of standardisation in the hardware?

Certainly you would need high-speed connections to transfer the data between the machines, but standardisation is needed more in the software than in the hardware. You will need the right software interfaces to import and export data efficiently.

Another important factor is the ability to filter the data. In the real world it won't be practical to transfer all of the data to all of the companies in the value chain, because each will have some proprietary processes that it might not be willing to share. So there has to be a solution that allows the information relevant to the next step of the process to be passed on while protecting confidential information. Solutions are in development, and I think system security and the protection of intellectual property and confidential data will be important issues in the implementation of I4.0 in manufacturing industries. This might require a form of digital contract stipulating the conditions for access to and use of the data transferred between companies.
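The data filtering mentioned above can be pictured as a per-partner whitelist applied before any record leaves the company. In the sketch below the `CONTRACT` table stands in for the 'digital contract'; the partner names, field names and values are invented for illustration, and a real solution would also need authentication, encryption and usage enforcement, none of which is shown.

```python
# Hypothetical "digital contract": the fields each partner is allowed to receive.
CONTRACT: dict[str, set[str]] = {
    "heat_treatment_supplier": {"part_id", "material", "machined_geometry"},
    "customer": {"part_id", "machined_geometry", "inspection_result"},
}


def share_with(partner: str, record: dict) -> dict:
    """Return only the fields the partner is entitled to; refuse unknown partners."""
    if partner not in CONTRACT:
        raise PermissionError(f"no data-sharing agreement with {partner!r}")
    allowed = CONTRACT[partner]
    return {key: value for key, value in record.items() if key in allowed}


# Hypothetical Digital Twin record held by the machining company.
record = {
    "part_id": "QR-0001",
    "material": "42CrMo4",
    "machined_geometry": "part_0001_asmachined.stp",
    "cutting_parameters": {"feed_mm_per_rev": 0.2},  # proprietary, never shared
    "inspection_result": "pass",
}
print(share_with("heat_treatment_supplier", record))
```

A whitelist, rather than a list of fields to hide, means that any new field added to the record stays private until a contract explicitly grants access to it.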

What will be the next steps that need to be taken to progress the implementation of I4.0?

There is definitely a need for greater standardisation in how data is gathered and transferred throughout the process chain. Standardising the data flow would be a big step forward for I4.0. The Digital Twin concept needs further development to improve its representation of the component being made. It needs to be available at all stages of the process, for use by those responsible for design, materials and manufacturing.

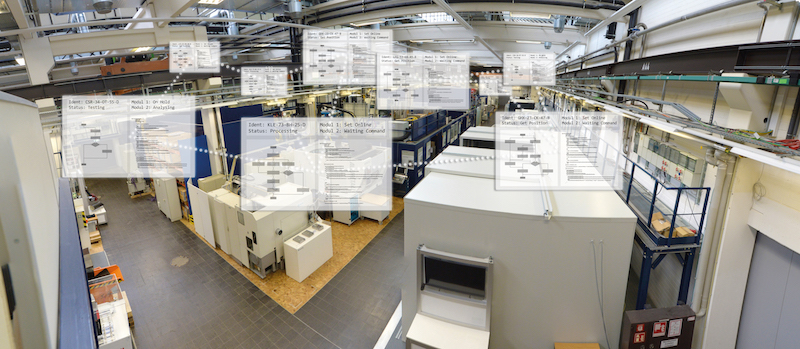

Hanover Fair 2017: Component with responsibility

At this year's Hanover Fair, Fraunhofer presented a new software development that allows each component to instruct the machines which operation to perform, decoupling it from central production planning and increasing the agility and flexibility of the production process.

Fraunhofer IPT engineers in Aachen have developed a production system in which each component carries its own process information, namely which production steps it has to go through. The system is called the "Service-oriented architecture for adaptive and networked production". Each component is treated as an individual, with its own stored record of the production steps it is to pass through. Only when a machining step is pending does the system select, from the machines with suitable capabilities, the one that is most readily available.

The decisive factor is that, at every production step, the system records which task has been performed and what the component has actually undergone, so the software builds up the production history of each individual component, creating a so-called Digital Twin. So that each component can be recognised individually, it carries a QR code.
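The per-component record described above, a recipe of pending steps plus a history of what the part has actually experienced, can be sketched as a small data structure keyed by the identifier read from the part's QR code. The class, field and step names are hypothetical and do not reflect the IPT software's actual data model.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DigitalTwin:
    component_id: str  # identifier encoded in the part's QR code
    recipe: list[str]  # pending production steps, in order
    history: list[dict] = field(default_factory=list)  # what the part has actually experienced

    def next_step(self) -> Optional[str]:
        """The step the component will ask for next, or None when it is finished."""
        return self.recipe[0] if self.recipe else None

    def record(self, step: str, machine: str, data: dict) -> None:
        """Log a completed step with its process data and remove it from the recipe."""
        if step != self.next_step():
            raise ValueError(f"expected step {self.next_step()!r}, got {step!r}")
        self.recipe.pop(0)
        self.history.append({"step": step, "machine": machine, "data": data})


twin = DigitalTwin("QR-0001", ["milling", "deburring", "inspection"])
twin.record("milling", "mill_c", {"max_spindle_load_pct": 62})
print(twin.next_step())  # deburring
```

Because the recipe travels with the component, any machine that scans the QR code can look up what the part needs next without consulting a central schedule.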

This strategy is especially important for companies that produce batches of different components. In a conventional production operation, systems have to be repeatedly modified, reprogrammed and converted during the changeover to a new product. In the service-oriented approach, it is the individual product that tells the devices what to do. "As a result of the networking of components and machines, companies will be able to produce one-offs in the future, even batches of 1," says Sven Jung, who is managing the project at the Fraunhofer IPT to develop the new software. All the process data for each component is provided as a Digital Twin within an intelligent manufacturing network, the "Smart Manufacturing Network". These data sets can be analysed and re-used, which increases process robustness and product quality.

Service-oriented software for flexible production

Using the service-oriented software, the sequence of the production process can be configured via a menu. The user drags individual work steps from the list of all services, which is derived from the production environment and thus from the production machines, into the desired process chain and assembles them like building blocks. The software also avoids downtime caused by an unavailable machine: because the 'recipe' stored in the Digital Twin holds the details of the next step, the component can be flexibly redirected to another machine that offers that step. "Many machines can perform several tasks in one production line," says Sven Jung. "A technically sophisticated 5-axis milling machine can, for example, also carry out the job of a simpler 3-axis milling machine. Within the Smart Manufacturing Network, the service-oriented software can flexibly decide in the future to do the job on the 5-axis machine, which is currently free."
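Sven Jung's 5-axis example amounts to a capability relation: a more capable machine also covers simpler services. The sketch below uses a hypothetical `COVERS` table to validate a user-assembled process chain against the services the shopfloor can provide, then routes each step to the first free machine whose capability covers it. The table, the machine names and the first-free routing rule are assumptions for illustration.

```python
from typing import Optional

# Hypothetical capability table: each machine's native service also covers simpler ones.
COVERS: dict[str, set[str]] = {
    "5-axis milling": {"5-axis milling", "3-axis milling"},
    "3-axis milling": {"3-axis milling"},
    "turning": {"turning"},
}

machines = [
    {"name": "mill_3ax", "service": "3-axis milling", "busy": True},
    {"name": "mill_5ax", "service": "5-axis milling", "busy": False},
    {"name": "lathe_1", "service": "turning", "busy": False},
]


def offered_services(machines: list[dict]) -> set[str]:
    """The service list the user picks steps from, derived from the machines present."""
    return set().union(*(COVERS[m["service"]] for m in machines))


def build_chain(steps: list[str], machines: list[dict]) -> list[str]:
    """Accept a process chain only if every step is a service some machine offers."""
    missing = [s for s in steps if s not in offered_services(machines)]
    if missing:
        raise ValueError(f"no machine offers: {missing}")
    return list(steps)


def route(step: str, machines: list[dict]) -> Optional[str]:
    """Return the first free machine whose capability covers the step, if any."""
    for m in machines:
        if not m["busy"] and step in COVERS[m["service"]]:
            return m["name"]
    return None


chain = build_chain(["turning", "3-axis milling"], machines)
print({step: route(step, machines) for step in chain})
# {'turning': 'lathe_1', '3-axis milling': 'mill_5ax'}
```

With the 3-axis mill busy, the 3-axis milling step lands on the free 5-axis machine, as in the example Jung describes.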