Simulation stimulates Ford's improvement

Mike Farish reports on Ford’s use of computer simulation to develop its manufacturing processes

Before any new engine goes into production within the Ford organisation in Europe, essential details about it will come to John Ladbrook’s team, based at the company’s Dunton Research Centre in the UK. Ladbrook is a simulation technical expert and a ‘black belt’ in Six Sigma process improvement. After 45 years of service at Ford, he now runs a team tasked with simulating the manufacturing and assembly operations necessary to make Ford’s engines, along with associated material movement and feed-in requirements.

The “engine” that drives their work is a software program called Witness, originally provided by external vendor company Lanner. Ford has worked with versions of this tool since it began the computerisation of its operations over 30 years ago.

As Ladbrook explains, his team comes into play after the design of the engine is finalised and passed through to the company’s manufacturing engineering operation to be made. “We don’t affect the design in any way,” he confirms.

Before Ladbrook and his immediate colleagues get involved, two other teams provide their input. The first of these decides the ‘process’ – in other words, precisely what type of manufacturing operations will be required to make the parts and assemble them into complete engines.

The second will then determine the ‘layout’ – quite simply, how much floorspace will be required to accommodate the necessary operations.

Ladbrook points out that given the inevitable pressures to get actual production operations underway as quickly as possible, these two intermediate sets of activities may not have been completed before his team get involved. However, this is not a situation he finds uncongenial. “We want to start work as soon as we can,” he explains. “The basic information we need is how many machines will be used and their cycle times.”

Ladbrook says that the broad responsibility of the simulation team is to ensure that the realities of production can be aligned with expectations. “We have to verify that the programme assumptions and the planning intent are achievable within the stipulated cost constraints,” he explains.

This task involves the creation of “models of machines” defined by performance data on factors such as parts going in and coming out; cycle times; estimated breakdown rates; tool change requirements; estimated part quality – effectively a prediction of expected quality rates based on previous experience of the type of production task involved; buffering; and staffing.

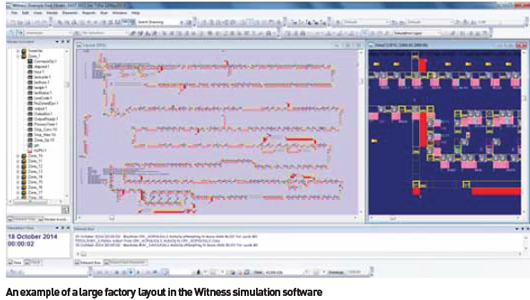

The modelling undertaken by the simulation team is not kinematic – they are not, for instance, simulating the physical actions of pick-and-place robots. Rather, they are modelling a process, which Ladbrook terms “discrete event simulation.” As such they work in 2D, not in the world of 3D virtual reality, which Ladbrook stresses would introduce a level of superfluous complexity with no apparent advantage.
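For readers unfamiliar with the term, the idea behind discrete event simulation can be sketched in a few lines of Python. This is an illustration only, not a description of how Witness works internally: the function `simulate_machine`, its parameters and the single-machine set-up are all assumptions made for the example. The point is that the clock jumps from one event to the next (a part arriving, a machine finishing a cycle) rather than animating any physical motion.

```python
import heapq


def simulate_machine(arrival_interval, cycle_time, horizon):
    """Event-driven model of one machine with an unlimited input queue.

    Hypothetical example: parts arrive at a fixed interval and each takes
    a fixed cycle time. Returns (parts completed, parts still waiting).
    """
    # Each event is (time, tie-breaker, kind); the heap keeps them in time order.
    events = [(0, 0, "arrive")]
    seq = 1
    waiting = 0
    busy = False
    completed = 0

    while events:
        time, _, kind = heapq.heappop(events)
        if time > horizon:
            break  # nothing later than the horizon is of interest

        if kind == "arrive":
            waiting += 1
            # Schedule the next arrival.
            heapq.heappush(events, (time + arrival_interval, seq, "arrive"))
            seq += 1
        else:  # "finish": the machine has completed a part
            busy = False
            completed += 1

        # If the machine is free and a part is waiting, start the next cycle.
        if not busy and waiting > 0:
            waiting -= 1
            busy = True
            heapq.heappush(events, (time + cycle_time, seq, "finish"))
            seq += 1

    return completed, waiting


completed, waiting = simulate_machine(arrival_interval=2, cycle_time=3, horizon=12)
print(completed, waiting)
```

With parts arriving every 2 minutes at a machine that needs 3 minutes per part, the sketch reports four parts completed and two still waiting after 12 minutes: the machine is the constraint and the queue grows. No geometry or motion is modelled at any point, only the timing of events.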

Although the sophisticated Witness software is required to do some intensive ‘number crunching,’ the relative simplicity of the raw data that is fed into it means no complicated interface or input routines are required. Instead the ‘front end’ of the system, as it is used within Ford, uses procedures specified by the simulation team that are based on nothing more exotic than an Excel spreadsheet package.

This simplicity of procedure is no accident. Ford specified its own Excel-based interface for the software back in the late 1990s, initially called First, though its title has since changed to the equally pertinent Fast. “Fast is what we see and it helps us make changes quickly, but Witness still holds the logic, interprets the information and builds the model,” explains Ladbrook.

Fast also obviates the need for the constant re-entry of “input and output logic” for individual machines when new models are being built, and this re-use of data is a key element in the overall efficiency of system operation.

Another benefit, says Ladbrook, is that the individuals working with the system do not need modelling skills.

Instead they tend to have qualifications in areas such as manufacturing systems, giving them an understanding of the process they are modelling rather than just the means by which they are modelling it.

Ladbrook says that a typical recruit to the team with no previous experience of working with such a software system “can build and deliver models to the manufacturing engineering team within three months.”

A specialist from Lanner worked in-house at Ford for a year during the system’s development and, as Ladbrook describes the relationship between the two companies, it has come to resemble that between an OEM and a tier one supplier. “They are a partner and we have regular meetings with them to specify our requirements,” he says, though he adds that in the case of Fast, upgrades are now carried out in-house and that Ford itself owns the software.

Data loading and experimentation

Despite its simplicity, the quantity of data that has to be entered into the system is considerable. A manufacturing line involving, say, 20 different operations would probably require in excess of 3,000 items of data, estimates Ladbrook, and simply getting all that loaded in would be likely to require five days of intensive work.

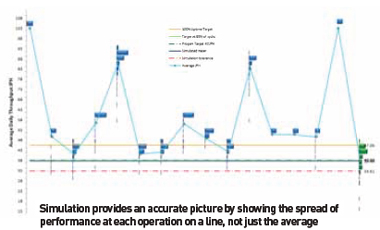

Once that work is done, it becomes possible to run what Ladbrook terms “experiments” – various ‘what if?’ scenarios in which factors such as buffer size or cycle time can be varied and the effects on the overall operation of the intended line calculated. “Our objective is to make the line achieve the required jobs-per-hour target,” Ladbrook explains. “If we can’t make that target then we have to find a way of achieving it.”
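The kind of ‘what if?’ experiment described above can be illustrated with a toy model. The Python sketch below is an assumption-laden stand-in, not Ford’s Fast interface or a Witness model: it imagines two identical machines separated by a buffer, each with a one-minute cycle and a five-minute tool change after every tenth part. Those figures, the function names and the 39 jobs-per-hour target are all invented for the example. For brevity it tracks the time each part leaves each machine rather than running a full event queue, and it varies only the buffer size.

```python
def service_time(part_index, cycle_min, tool_life, tool_change_min):
    """Cycle time, plus a tool change on every `tool_life`-th part."""
    extra = tool_change_min if (part_index + 1) % tool_life == 0 else 0.0
    return cycle_min + extra


def jobs_per_hour(buffer_size, horizon_min=60.0, cycle_min=1.0,
                  tool_life=10, tool_change_min=5.0):
    """Parts leaving machine B within the horizon on a hypothetical A -> buffer -> B line."""
    a_depart = []  # time each part leaves machine A
    b_depart = []  # time each part leaves machine B
    j = 0
    while True:
        s = service_time(j, cycle_min, tool_life, tool_change_min)
        a_start = a_depart[j - 1] if j > 0 else 0.0
        a_finish = a_start + s
        # A cannot release part j until a downstream slot (buffer or B) is free,
        # which happens once part j - buffer_size - 1 has left machine B.
        k = j - buffer_size - 1
        a_leave = max(a_finish, b_depart[k]) if k >= 0 else a_finish
        # B starts part j once it has arrived and B has finished the previous part.
        b_start = max(a_leave, b_depart[j - 1]) if j > 0 else a_leave
        b_leave = b_start + s
        if b_leave > horizon_min:
            return j  # parts 0 .. j-1 were completed in time
        a_depart.append(a_leave)
        b_depart.append(b_leave)
        j += 1


TARGET_JPH = 39  # hypothetical jobs-per-hour target

# One "experiment" per buffer size, everything else held constant.
results = {b: jobs_per_hour(b) for b in range(0, 7)}
smallest_ok = min((b for b, jph in results.items() if jph >= TARGET_JPH),
                  default=None)

for b, jph in results.items():
    print(f"buffer={b}: {jph} jobs/hour")
print("smallest buffer meeting target:", smallest_ok)
```

With these made-up inputs, throughput climbs as buffer slots are added and then levels off once the buffer is large enough to cover a tool change, which is the sort of pattern an experiment is meant to expose. A real study would vary many more factors and draw on measured data.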

The sheer volume of iterations that the team may carry out is considerable. Ladbrook says the set-up enables over 200 experiments to be performed in a single day. What is then made visible is on-screen information about the movement of parts and the status of machines, which members of the team have to monitor directly in order to understand how the model is performing. The operation of the system, therefore, while not a photo-realistic animation, is still actively graphic and requires the team members’ immediate attention in order to identify potential areas of concern in the model.

Ladbrook adds that his team’s involvement is not just a single episode in the overall process of designing and building a real-life production line. From the time the first Fast data is entered to the point at which a real engine comes off the line will be something in the order of three years. During that period the team are likely to find themselves involved in four distinct phases of intensive work, each about three months long, with other activities at a lower intensity in the intervening periods. They will also help support the actual launch of the line, as “closing the loop” between simulation and operation helps refine model creation activities for the future.

Troubleshooting and improvement

The simulation team’s work is not limited to modelling new production lines. It can also be called on to help ‘troubleshoot’ existing lines that are deemed to be underperforming in some way. For example, the team were asked to help investigate unacceptable reject rates on a V6 engine production line at Ford’s plant in Cologne. The rejects were causing a bottleneck and the team were able to show how some extra buffering could ameliorate the situation. The root cause was found to be a mismatch in design specification between units intended for the US and European markets; the problem was therefore solved back at the product design stage, but Ladbrook says the simulation team’s work helped avoid any unnecessary fundamental change to the line itself. He adds that carrying out remedial work of this sort on existing lines is a useful way of keeping up to date with manufacturing technology as it is currently employed.

The simulation team can also be called in to help with improvement projects using the Six Sigma methodology.

Ladbrook, himself an exponent, says that in this instance technique and technology are entirely compatible. “Six Sigma is about simulation,” he summarises. In practical terms, the sort of qualitative improvement that the methodology may cause to be implemented at a microscopic level – such as the alteration of a procedure at a single point on a line – may have macroscopic consequences that affect overall production volumes. In such cases, the Witness software acts as a tool to predict whether these quantitative changes might occur.

As production technology changes it can have a major impact on the work of the simulation team, and Ladbrook identifies one of the biggest changes as the increasing adoption of manufacturing cells in place of long, continuous transfer lines. Part of the impact of this is purely quantitative – there is a lot more information about individual machines to be entered into the system – but there is also a qualitative element.

Ladbrook says that the associated material handling requirements of manufacturing cells can be much more complicated than the simple “one part in, one part out” routine associated with a machine on a transfer line. If there are, for example, “eight machines under a gantry... the logic of that gantry needs to be correct because that can affect the throughput of the cell – you have to figure out where it will pick a part up and where it will put it down.”

Ladbrook indicates that the increasing requirement to simulate the effects of logistical and other ancillary operations is having a bigger impact on the work of the team than more basic changes to manufacturing procedures. One example he cites is the frequency of tool changes.

Another is the operation of offline metrology routines, which he says can often cause bottlenecks.

On another level, these last two examples indicate that the fundamental current trend is not towards fragmentation through the addition of extra functionality, but the opposite – integration through the construction of complete models in which every process is represented. Ladbrook concurs.

“We will eventually model the total plant,” he says, indicating that this will mean factoring in elements such as incoming and outgoing vehicles and demand from the body plant.

In fact, he says, some interesting work is already underway at Dunton along these lines to enable the inclusion in simulation models of information about energy consumption on the shopfloor. “We have got someone working on that and we have already got some results for people to work with,” he states, adding that if necessary he will ask Lanner to develop further appropriate functionality within the software.

A more distant prospect might even be the construction of models that incorporate information about manufacturing operations out in the supply chain. Ladbrook does not dismiss the idea and says that elsewhere within Ford there is some supplier input to the construction of manufacturing simulation models. In the meantime, more immediate likely developments will pose challenges that need to be solved. For instance, as models become more comprehensive it will become increasingly difficult for single individuals to understand and interact with them. “One person would not be able to control a total plant model,” Ladbrook advises.

In fact, some research work has already been commissioned at a UK university, aimed at enhancing the ease with which information generated by the model can be comprehended. “There is a lot of information in the model,” he states, adding that perhaps some of it is currently going to waste simply because people operating the system cannot absorb and act on it.

Ladbrook’s ambition, he says, is to initiate the development of what he terms “symbiotic modelling”, which would effectively mean that the software would become more autonomous; capable of updating itself and flagging up issues independently of human operators.

Ultimately, he indicates that the most fundamental change in the way that manufacturing simulation is used within Ford would be for it to become a “proactive” rather than “reactive” tool, employed much sooner in the development cycle for new manufacturing lines. It can be made to work effectively, he believes, when the data available is concerned exclusively with ‘process’ and not ‘layout.’ That would require some work to standardise processes and data, but it would, he is confident, lead to a real improvement in the overall efficiency of the development process.